
Code and data for the following works:

- [ICLR 2025] SWE-bench Multimodal: Do AI Systems Generalize to Visual Software Domains?

- [ICLR 2024 Oral] SWE-bench: Can Language Models Resolve Real-World GitHub Issues?

📰 News

- [Jan. 13, 2025]: We've integrated SWE-bench Multimodal (paper, dataset) into this repository! Unlike SWE-bench, we've kept evaluation for the test split private. Submit to the leaderboard using sb-cli, our new cloud-based evaluation tool.

- [Jan. 11, 2025]: Thanks to Modal, you can now run evaluations entirely on the cloud! See here for more details.

- [Aug. 13, 2024]: Introducing SWE-bench Verified! Part 2 of our collaboration with OpenAI Preparedness. A subset of 500 problems that real software engineers have confirmed are solvable. Check out more in the report!

- [Jun. 27, 2024]: We have an exciting update for SWE-bench - with support from OpenAI's Preparedness team: We're moving to a fully containerized evaluation harness using Docker for more reproducible evaluations! Read more in our report.

- [Apr. 2, 2024]: We have released SWE-agent, which sets the state-of-the-art on the full SWE-bench test set! (Tweet 🔗)

- [Jan. 16, 2024]: SWE-bench has been accepted to ICLR 2024 as an oral presentation! (OpenReview 🔗)

👋 Overview

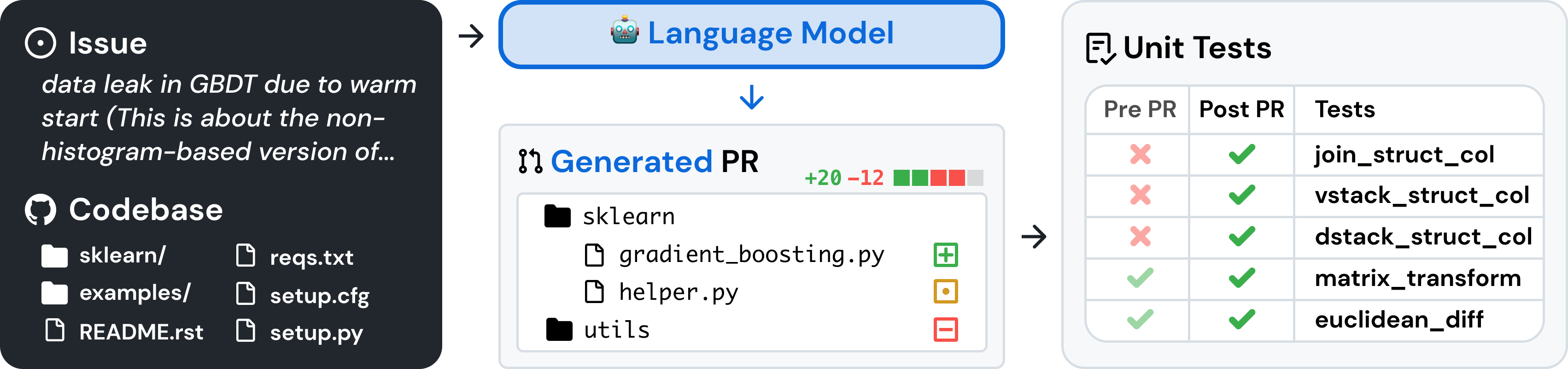

SWE-bench is a benchmark for evaluating large language models on real-world software issues collected from GitHub. Given a codebase and an issue, a language model is tasked with generating a patch that resolves the described problem.

To access SWE-bench, copy and run the following code:

from datasets import load_dataset

swebench = load_dataset('princeton-nlp/SWE-bench', split='test')
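Once loaded, each entry behaves like a dictionary describing one task instance. As a rough sketch of how you might get a feel for the data, the snippet below counts instances per repository. It assumes each record carries a `repo` field alongside `instance_id`; the helper name `count_by_repo` and the sample records are made up for illustration so the sketch runs offline.

```python
from collections import Counter
from typing import Iterable, Mapping


def count_by_repo(instances: Iterable[Mapping[str, str]]) -> Counter:
    """Count task instances per source repository (assumes a `repo` field)."""
    return Counter(inst["repo"] for inst in instances)


# Stand-in records for illustration only; with the real dataset,
# pass the `swebench` object returned by load_dataset instead.
sample = [
    {"instance_id": "sympy__sympy-20590", "repo": "sympy/sympy"},
    {"instance_id": "example__project-1", "repo": "example/project"},
    {"instance_id": "example__project-2", "repo": "example/project"},
]

for repo, n in count_by_repo(sample).most_common():
    print(f"{repo}: {n}")
```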

🚀 Set Up

SWE-bench uses Docker for reproducible evaluations. Follow the instructions in the Docker setup guide to install Docker on your machine. If you're setting up on Linux, we recommend seeing the post-installation steps as well.
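Before going further, it can help to confirm that Docker is actually reachable from your environment. The following is a minimal sketch, not part of SWE-bench itself: the helper name `docker_available` is hypothetical, and it only checks that the `docker` CLI is on your PATH and that `docker info` exits successfully.

```python
import shutil
import subprocess


def docker_available(timeout: float = 10.0) -> bool:
    """Return True if the docker CLI is found and `docker info` succeeds."""
    if shutil.which("docker") is None:
        return False  # CLI not installed or not on PATH
    try:
        result = subprocess.run(
            ["docker", "info"],
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
            timeout=timeout,
        )
    except (subprocess.TimeoutExpired, OSError):
        return False  # daemon unresponsive or CLI could not be executed
    return result.returncode == 0


print("Docker ready:", docker_available())
```

If this prints `False` on Linux even though Docker is installed, the post-installation steps (for example, permissions for your user) are a likely place to look.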

Finally, to build SWE-bench from source, follow these steps:

git clone git@github.com:princeton-nlp/SWE-bench.git
cd SWE-bench
pip install -e .

Test your installation by running:

python -m swebench.harness.run_evaluation \
    --predictions_path gold \
    --max_workers 1 \
    --instance_ids sympy__sympy-20590 \
    --run_id validate-gold

💽 Usage

Evaluate patch predictions on SWE-bench Lite with the following command:

python -m swebench.harness.run_evaluation \
    --dataset_name princeton-nlp/SWE-bench_Lite \
    --predictions_path <path_to_predictions> \
    --max_workers <num_workers> \
    --run_id <run_id>
    # use --predictions_path 'gold' to verify the gold patches
    # use --run_id to name the evaluation run

This command generates Docker build logs (logs/build_images) and evaluation logs (logs/run_evaluation) in the current directory.

The final evaluation results are stored in the evaluation_results directory.
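The file passed to `--predictions_path` holds one prediction per task instance. As a hedged sketch, the snippet below writes and reads such a file as JSON Lines. The field names (`instance_id`, `model_name_or_path`, `model_patch`) reflect our understanding of the format the harness expects and are not stated in this README, so please check them against the evaluation tutorial. The helper names, the model name, and the patch text are placeholders.

```python
import json
import tempfile
from pathlib import Path


def write_predictions(path: Path, predictions: list[dict]) -> None:
    """Write predictions as JSON Lines: one JSON object per line."""
    with Path(path).open("w", encoding="utf-8") as f:
        for pred in predictions:
            f.write(json.dumps(pred) + "\n")


def read_predictions(path: Path) -> list[dict]:
    """Read a JSON Lines predictions file back into a list of dicts."""
    with Path(path).open("r", encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Placeholder values; field names are an assumption to verify.
example = [
    {
        "instance_id": "sympy__sympy-20590",
        "model_name_or_path": "my-model",
        "model_patch": "<unified diff produced by the model>",
    }
]

out_path = Path(tempfile.mkdtemp()) / "predictions.jsonl"
write_predictions(out_path, example)
print(f"Wrote {len(read_predictions(out_path))} prediction(s) to {out_path}")
```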

[!WARNING] SWE-bench evaluation can be resource intensive. We recommend running on an x86_64 machine with at least 120GB of free storage, 16GB of RAM, and 8 CPU cores. We recommend using fewer than min(0.75 * os.cpu_count(), 24) for --max_workers.

If running with Docker Desktop, make sure to increase your virtual disk space to ~120GB free. Set --max_workers to be consistent with the above for the CPUs available to Docker.

Support for arm64 machines is experimental.
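The worker recommendation above can be turned into a small helper. This is a sketch under one interpretation: "fewer than" is read strictly, so the function returns the largest whole number below min(0.75 * cpu_count, 24), with a floor of 1. The function name `suggested_max_workers` is hypothetical. When running under Docker Desktop, pass the number of CPUs allotted to Docker rather than the host count.

```python
import math
import os
from typing import Optional


def suggested_max_workers(cpu_count: Optional[int] = None) -> int:
    """Largest integer strictly below min(0.75 * cpu_count, 24), at least 1."""
    if cpu_count is None:
        # os.cpu_count() may return None on some platforms; fall back to 1.
        cpu_count = os.cpu_count() or 1
    bound = min(0.75 * cpu_count, 24)
    # ceil(bound) - 1 is the largest integer strictly less than bound.
    return max(1, math.ceil(bound) - 1)


for cpus in (2, 8, 16, 64):
    print(f"{cpus} CPUs -> --max_workers {suggested_max_workers(cpus)}")
```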

To see the full list of arguments for the evaluation harness, run:

python -m swebench.harness.run_evaluation --help

See the evaluation tutorial for the full rundown on datasets you can evaluate. If you're looking for cloud-based evaluations instead of local ones, check out:

- sb-cli, our tool for running evaluations automatically on AWS, or...

- Running SWE-bench evaluation on Modal. Details here

You can also:

- Train your own models on our pre-processed datasets.

- Run inference on existing models (both local and API models). The inference step is where you give the model a repo + issue and have it generate a fix.

- Run SWE-bench's data collection procedure (tutorial) on your own repositories to make new SWE-bench tasks.

- ⚠️ We are temporarily pausing support for queries around creating SWE-bench instances. Please see the note in the tutorial.

⬇️ Downloads

💫 Contributions

We would love to hear from the broader NLP, Machine Learning, and Software Engineering research communities, and we welcome any contributions, pull requests, or issues! To contribute, please file a new pull request or issue and fill in the corresponding template. We'll be sure to follow up shortly!

Contact persons: Carlos E. Jimenez and John Yang (Email: carlosej@princeton.edu, johnby@stanford.edu).

✍️ Citation

If you find our work helpful, please use the following citations.

@inproceedings{
    jimenez2024swebench,
    title={{SWE}-bench: Can Language Models Resolve Real-world Github Issues?},
    author={Carlos E Jimenez and John Yang and Alexander Wettig and Shunyu Yao and Kexin Pei and Ofir Press and Karthik R Narasimhan},
    booktitle={The Twelfth International Conference on Learning Representations},
    year={2024},
    url={https://openreview.net/forum?id=VTF8yNQM66}
}

@inproceedings{
    yang2024swebenchmultimodal,
    title={{SWE}-bench Multimodal: Do AI Systems Generalize to Visual Software Domains?},
    author={John Yang and Carlos E. Jimenez and Alex L. Zhang and Kilian Lieret and Joyce Yang and Xindi Wu and Ori Press and Niklas Muennighoff and Gabriel Synnaeve and Karthik R. Narasimhan and Diyi Yang and Sida I. Wang and Ofir Press},
    booktitle={The Thirteenth International Conference on Learning Representations},
    year={2025},
    url={https://openreview.net/forum?id=riTiq3i21b}
}

🪪 License

MIT. Check LICENSE.md.